Today, a global coalition of 53 civil society organisations have joined together to call on Members of the European Parliament to use their democratically-elected powers to protect us all from biometric mass surveillance practices. The EU must not legitimise these dangerous practices. Otherwise, EU lawmakers risk setting a precedent for uses of AI-based technology which could destroy people’s anonymity forever and suppress a broad range of our rights and freedoms.

Brussels, Tuesday 10 May, 2022

Dear honourable Members of the European Parliament,

We write to you today as 53 organisations to ask: Will you stand up for our rights by prohibiting biometric mass surveillance in the Artificial Intelligence Act?

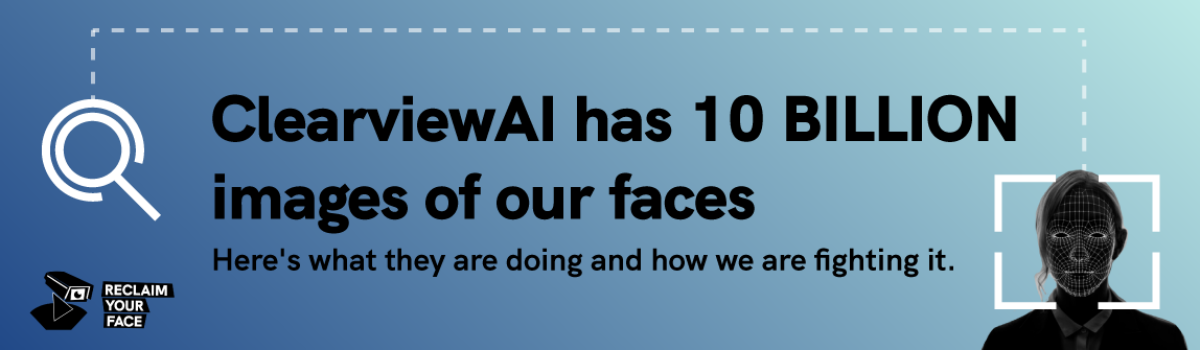

In Europe and across the world, the use of remote biometric identification (RBI) systems such as facial recognition, in our publicly accessible spaces, represents one of the greatest threats to fundamental rights and democracy that we have ever seen.

The remote use of such systems destroys the possibility of anonymity in public, and undermines the essence of our rights to privacy and data protection, the right to freedom of expression, rights to free assembly and association (leading to the criminalisation of protest and causing a chilling effect), and rights to equality and non-discrimination.

Without an outright ban on the remote use of these technologies in publicly accessible spaces, all the places where we exercise our rights and come together as communities will be turned into sites of mass surveillance where we are all treated as suspects.

These harms are not hypothetical. Uyghur Muslims have been systematically persecuted by the Chinese government through the use of facial recognition. Pro-democracy protesters and political opponents have been suppressed or targeted in Russia, Serbia and Hong Kong through the use – and in some cases, even just the fear of the use of – RBI in publicly-accessible spaces. And many people have been wrongfully and traumatically arrested around the world.

In response to the ever-increasing proliferation of these uses and their harms, people are pushing back and calling for prohibitions. More than 24 US states have taken steps against facial recognition or other forms of biometric mass surveillance. In South America, two recent rulings in São Paulo and Buenos Aires have ordered the suspension of facial recognition systems.

Some of the world’s biggest providers of biometric surveillance systems – Microsoft, Amazon and IBM – have even adopted self-imposed moratoriums due to the major risks and harms that they know their systems perpetuate; and Facebook has deleted its mass facial image database.

Despite the strong protections afforded to biometric data in EU data protection law, we see companies and public authorities systematically misusing “consent” and vague security justifications as a basis for the use of facial recognition and other biometric systems in ways that amount to inherently disproportionate mass surveillance practices.

While democratic countries around the world are taking steps to protect their communities, the EU is heading in the opposite direction.

A clear, unambiguous prohibition is needed in the AI Act to put a stop to the dangerous status quo. In 2021, the European Parliament adopted a powerful stance against biometric mass surveillance practices in the AI in criminal law report, which calls for: “a ban on any processing of biometric data, including facial images, for law enforcement purposes that leads to mass surveillance in publicly accessible spaces” (Article 31).

The AI Act is the obvious way for this important European Parliament resolution to be translated into binding, impactful law.

The urgent need for further action has also been recognised at EU Member State level. Italy has introduced Europe’s first moratorium on public facial recognition. The German coalition government has called for an EU-wide ban on biometric mass surveillance practices. Portugal dropped a law which would have legalised some biometric mass surveillance practices. And the Belgian Parliament is considering a moratorium on biometric surveillance.

Will you make (the right kind of) history?

There is already significant evidence that European residents havebeen systematically subjected to biometric mass surveillance practices. From football fans, to school children, to commuters, to shoppers, to people visiting LGBTQ+ bars and places of worship, the harms are real and prevalent. Via the Reclaim Your Face campaign, over 70,000 EU citizens urge you and your fellow lawmakers to better protect us from these undemocratic and harmful biometric systems.

Around the world, over 200 civil society organisations, from Burundi to Taiwan, have signed a letter calling for a global ban on biometric surveillance. As the first region to comprehensively regulate artificial intelligence, the EU’s actions – or inaction – will have major ramifications on biometric mass surveillance practices in every corner of the globe.

While dozens of US states are learning from horrendous mistakes such as the facial recognition-enabled suppression of Black Lives Matter protesters, governments in India, China and Russia are moving in the opposite direction. Which side of history will the EU be on: legitimising authoritarian technological surveillance, or choosing fundamental rights?

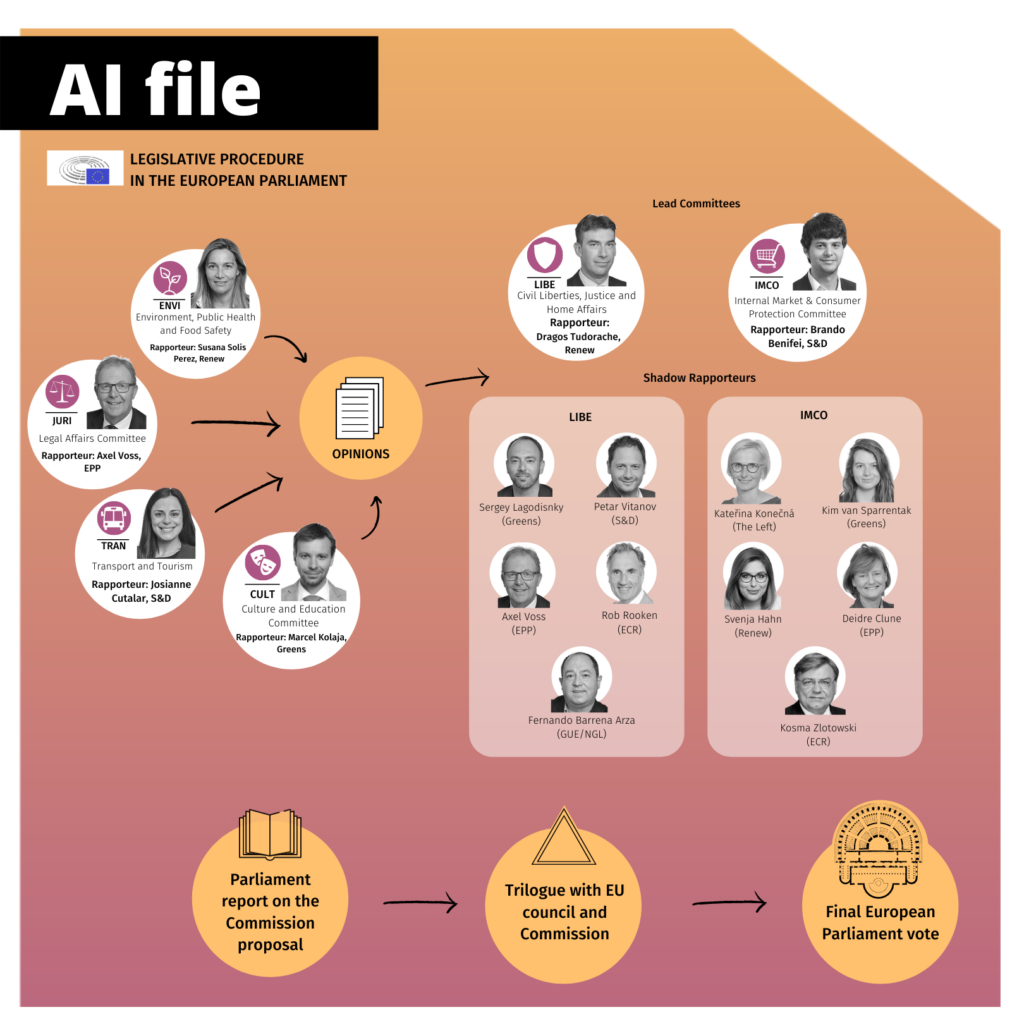

How can we make this a reality in the AI Act?

The AI Act must prohibit all remote(i.e. generalised surveillance) uses of biometric identification (RBI) in publicly-accessible spaces. This means that uses like unlocking a smartphone or using an ePassport gate would not be prohibited. While Article 5(1)(d) already aims to prohibit some uses of RBI, its scope is so narrow and contains so many exceptions that it practically provides a legal basis for practices that should, in fact, already be prohibited under existing data protection rules.

We therefore call on you to propose amendments to Article 5(1)(d) which would:

- Extend the scope of the prohibition to cover all private as well as public actors;

- Ensure that all uses of RBI (whether real-time or post) in publicly-accessible spaces are included in the prohibition; and

- Delete the exceptions to the prohibition, which independent human rights assessments confirm do not meet existing EU fundamental rights standards.

To ensure a comprehensive approach to the protection of biometric data, we additionally urge you to use the opportunity provided by the AI Act to put a stop to discriminatory or manipulative forms of biometric categorisation, and to properly address the risks of emotion recognition.

The EU aims to create an “ecosystem of trust and excellence” for AI and to be the world leader in trustworthy AI. Accomplishing these aims will mean putting a stop to applications of AI that undermine trust, violate our rights, and turn our public spaces into surveillance nightmares. We can promote AI that really serves people, while stamping out the most dangerous applications of this powerful technology.

That’s why the EU’s way must be to truly put people at the heart, and to put forward amendments to the IMCO-LIBE report on the AI Act which willensure a genuine ban on biometric mass surveillance practices.

Signed,

Reclaim Your Face

Organisational signatories:

Access Now (International)

AlgorithmWatch (European)

Alternatif Bilisim (AIA- Alternative Informatics Association) (Turkey)

anna elbe – Weitblick für Hamburg (Germany)

ARTICLE 19: Global Campaign for Free Expression (International)

Asociatia pentru Tehnologie si Internet – ApTI (Romania)

Barracón Digital (Honduras)

Big Brother Watch (UK)

Bits of Freedom (the Netherlands)

Blueprint for Free Speech (International)

Center for Civil Liberties (Ukraine)

Chaos Computer Club (Germany)

Civil Liberties Union for Europe (European)

D3 – Defesa dos Direitos Digitais (Portugal)

Digital Rights Watch (Australia)

Digitalcourage (Germany)

Digitale Freiheit (Germany)

Digitale Gesellschaft (Germany)

Digitale Gesellschaft CH (Switzerland)

Državljan D / Citizen D (Slovenia / European)

Eticas Foundation (European / International)

European Center For Not-For-Profit Law Stichting (ECNL) (European)

European Digital Rights (EDRi) (International)

European Disability Forum (EDF) (European)

Fachbereich Informatik und Gesellschaft, Gesellschaft für Informatik e.V. (Germany)

Fair Trials (International)

Fight for the Future (United States)

Football Supporters Europe (FSE) (European)

Hermes Center (Italy)

Hiperderecho (Perú)

Homo Digitalis (Greece)

Internet Law Reform Dialogue (iLaw) (Thailand)

Internet Protection Society (Russia / European)

Intersection Association for Rights and Freedoms (Tunisia)

IT-Pol Denmark (Denmark)

International Legal Initiative (Kazakhstan)

Iuridicum Remedium (IuRe) (Czech Republic)

JCA-NET (Japan)

Korean Progressive Network Jinbonet (Republic of Korea)

La Quadrature du Net (France)

Lady Lawyer Foundation (International)

LaLibre.net Tecnologías Counitarias (Ecuador / Latin America)

Ligue des droits de l’Homme (LDH) (France)

Ligue des droits humains (Belgium)

LOAD e.V. – Association for liberal internet policy (Germany)

Masaar – Technology and Law Community (Egypt)

Panoptykon Foundation (Poland)

Privacy International (International)

Privacy Network (Italy)

Statewatch (Europe)

Usuarios Digitales (Ecuador)

Wikimedia Deutschland (Germany / European)

Wikimedia France (France / European)

Individual signatories:

Douwe Korff, Emeritus Professor of International Law

Dr Vita Peacock, Anthropologist

Edson Prestes, Full Professor, Federal University of Rio Grande do Sul (Brazil)

For example: https://www.aclu.org/news/privacy-technology/i-did-nothing-wrong-i-was-arrested-anyway; https://www.nytimes.com/2020/12/29/technology/facial-recognition-misidentify-jail.html; https://www.wired.com/story/wrongful-arrests-ai-derailed-3-mens-lives/; https://edri.org/our-work/dangerous-by-design-a-cautionary-tale-about-facial-recognition/; https://www.law.georgetown.edu/privacy-technology-center/publications/garbage-in-garbage-out-face-recognition-on-flawed-data/

The General Data Protection Regulation, Article 9, paragraph 4, foresees additional protections of biometric data: “Member States may maintain or introduce further conditions, including limitations, with regard to the processing of … biometric data”.

This must be supported by a new Recital to better define “remote” use cases as those where cameras/devices are installed at a distance that creates the capacity to scan multiple persons, which in theory could identify one or more of them without their knowledge. Warning notices do not annul such a definition.