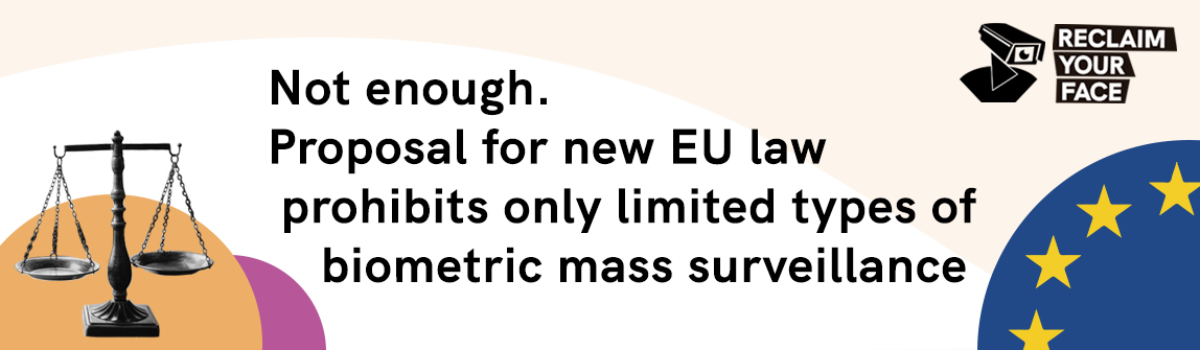

European Commission proposal for new AI Regulation shows exactly why we are fighting to ban BMS

Today’s European Commission proposal for a Regulation on artificial intelligence (AI) highlights the seriously risky business of biometric mass surveillance (BMS). It also proposes a new rule prohibiting law enforcement authorities from engaging in some of these practices, which is a step in the right direction.

In particular, we are relieved to see the Commission acknowledge two things. First, biometric mass surveillance practices have a seriously intrusive impact on people’s rights and freedoms. Second, the use of these technologies may “affect the private life of a large part of the population and evoke a feeling of constant surveillance (…)”. These harms are exactly why the Reclaim Your Face coalition has been fighting for a ban throughout our campaign, and the Commission’s acknowledgement shows that our arguments have hit home.

However, we are disappointed that today’s proposal does not go far enough to protect people from the wide range of biometric mass surveillance practices already in use across Europe. As a result, the proposal contradicts itself: it permits certain forms of biometric mass surveillance that it has already acknowledged are incompatible with our fundamental rights and freedoms in the EU!

Specifically, the proposal prohibits “real-time” “remote biometric identification” for law enforcement purposes. However, the biggest problems we see with the proposal’s approach are:

- The wording is often vague and relies on a number of poorly defined concepts, leaving vast room for interpretation and discretion. This recreates many of the problems in existing data protection laws that led us to call for a new ban, and it also undermines legal certainty for everyone;

- The prohibition applies only to law enforcement purposes. It fails to prohibit equally invasive and dangerous uses by other government authorities and by private companies, even though these uses also constitute biometric mass surveillance;

- The prohibition on law enforcement uses has many exceptions which are defined too broadly and could seriously undermine the purpose of the prohibition. This leaves room for continued biometric mass surveillance of the public space by law enforcement authorities;

- Only “real-time” identification for law enforcement purposes is prohibited. This means it could still be possible to identify people after the data has been collected (“post” identification, after the event). For example, police would not be banned from using Clearview AI’s notorious databases;

- All other ‘biometric identification’ practices, as well as the often pseudo-scientific and highly discriminatory practice of biometric ‘categorisation’, are permitted: the proposal states that they are not prohibited.

The Reclaim Your Face coalition, now made up of 60 (digital) human rights and social justice groups working across Europe, came together in October 2020 to fight biometric mass surveillance. From the start, the coalition has highlighted that this practice is invasive, discriminatory and undemocratic.

From facial recognition in parks and schools, to “smart” biometrics used to target protesters, to the systemic and oppressive surveillance of marginalised groups, we see no place in our societies for biometric technologies to be used in ways that treat us all as suspects. Our evidence has revealed that current abuses are vast and systemic, and our analysis has shown that the only way to protect the rights of people in Europe is to ban these practices. We should not have to look over our shoulders wherever we go.

Read the EDRi network’s first fundamental rights analysis of the wider proposal – including serious concerns about use cases that should have been prohibited but weren’t, and provisions that allow AI developers to ‘mark their own homework’.

What is civil society across Europe saying about biometric mass surveillance?

Biometric mass surveillance reduces our bodies to walking barcodes with the intention of judging the links between our data, our physical appearance and our intentions. We should protect this sensitive data because we only have one face, which we cannot swap or leave at home. Once we give up this data we will have lost all control.

Lotte Houwing – Bits of Freedom (the Netherlands)

Not all technological developments are compatible with social progress. Developments in artificial intelligence (a.k.a. machine learning) haven’t made mass surveillance any less harmful, they’ve just made it cheaper and easier to deploy. Whether it’s being done by thousands of police officers or one AI system, mass surveillance is incompatible with human rights, and applications of AI that enable and incentivise it need to be banned.

Daniel Leufer – Access Now (Global)

The Hellenic Police are significantly expanding their technological capabilities, including deploying facial recognition and other biometric processing technologies in public spaces. The risk of increased authoritarian societal control is real. We demand public spaces where everyone can express themselves freely and without fear. This is the EU that we envision.

Eleftherios Chelioudakis – Homo Digitalis (Greece)

If Europe does not block biometric surveillance, the risk of mass surveillance in our cities will be extremely real. Technology cannot have the power to define who we are, nor to control us. Privacy is power. Reclaiming possession of the city and the public spaces is the first step to reclaim our faces.

Laura Carrer – Hermes Center for Transparency and Digital Rights (Italy)

One of the biggest paradoxes is that the same politicians who claim to respect diversity, and who embrace the narrative about “expressing your true self” and “embracing your individuality”, support BMS: a technology trained to detect and flag every deviation from the standard look or behaviour. How can we be ourselves if it can automatically turn us into suspects?

Maria Wróblewska – Fundacja Panoptykon (Poland)

Biometric mass surveillance is not a dystopian fantasy but today’s reality: shady companies like Clearview AI and PimEyes are already assembling illegal face databases, building networked, centralised and all-encompassing records of our everyday lives. As long as governments and companies across Europe use these unlawful tools, we are living under Big Brother. A real ban on biometric mass surveillance is long overdue.

Matthias Marx – Chaos Computer Club (Germany)

The use of BMS is dangerous and restricts freedom for all of us. It is no longer a well-kept secret that BMS has strong biases (for example, by skin colour and gender) and high error rates. Using and filtering humans by metadata, which is inevitably linked to biometric features, leads to racial profiling and cannot be accepted in a society such as ours that honours human rights. Such invasive methods and systems have no place in an EU that strives for the well-being of all.

Nina Spurny – epicenter.works (Austria)

Further reading:

- EDRi’s press release on the AI legislation: https://edri.org/our-work/eus-ai-proposal-must-go-further-to-prevent-surveillance-and-discrimination/

- EDRi’s Ban Biometric Mass Surveillance position paper: https://edri.org/wp-content/uploads/2020/05/Paper-Ban-Biometric-Mass-Surveillance.pdf

- Letter to Commissioner Reynders from 56 civil society organisations to ban biometric mass surveillance: https://reclaimyourface.eu/letter-commission-justice-biometrics-ai/

- Statement from the European Data Protection Supervisor (EDPS) confirming that biometric identification can single out one individual amongst many, “even when commonly used identifiers are not available”: https://edps.europa.eu/sites/edp/files/publication/13-03-15_comments_dp_package_en.pdf

- Letter from 62 civil society organisations for red lines: https://edri.org/our-work/civil-society-call-for-ai-red-lines-in-the-european-unions-artificial-intelligence-proposal/

- Letter from 116 MEPs calling for fundamental rights: https://edri.org/our-work/meps-agree-we-need-ai-red-lines-to-put-people-over-profit/

- Evidence – Biometric mass surveillance across Europe: https://reclaimyourface.eu/evidence-in-eu-countries/
